Today, we’re releasing Adaptive Spec-driven Scoring for Evaluation and Regression Testing (ASSERT), an open-source framework for turning natural-language behavior specifications into executable evaluations. Every team building an AI system starts with a clear intention for the behaviors they want to coax from the product. Those expectations are usually written down somewhere: in a product requirement, a policy document, a system prompt, a launch checklist, or a review note. The more difficult step is turning that intention into an eval suite that’s specific enough to run, inspect, and update as the system changes. ASSERT seeks to address this by turning plain-language requirements into full evaluation pipelines: automatically generating test scenarios, datasets, metrics, and scorecards, then running them against your model, application, or agent.

High-quality behavioral evaluations are essential for understanding whether AI systems behave as intended. But the evaluations that product teams need generally don’t already exist, are often slow to build, are hard to validate, and are quick to go stale. Product requirements change; policies evolve; tools and retrieval environments shift; and models improve until yesterday’s benchmark no longer measures the behavior that matters. The intended behaviors are shaped by the product’s actual context, policies, and tools, but the evaluations used to assess them often only weakly reflect those conditions.

The gap is most visible in application-specific behavior. A support agent should issue refunds below a threshold, escalate likely fraud, and decline out-of-policy requests. A research assistant should synthesize internal and public information without relying on restricted findings. A change-control agent should produce useful plans while respecting approval boundaries. Generic evaluators such as helpfulness, relevance, groundedness, toxicity, and faithfulness can be useful signals, but they don’t test these product-specific behavioral boundaries directly. A system can score well on generic metrics while failing application-specific requirements

ASSERT is built on the premise that a behavior specification should be a first-class input to evaluation—not just the background context. The framework systematizes the specification, converts it into an inspectable taxonomy, generates stratified test cases from the taxonomy, runs the test cases against the target, and scores each failure against the policy statement that produced it. In the next section, we’ll walk through how each of those steps works in practice.

How ASSERT works

The pipeline has four stages. First, ASSERT turns a broad behavior specification into an explicit concept specification, which is then converted into a granular, editable behavior taxonomy with suggested permissible and impermissible behaviors. Next, it generates stratified test cases over the dimensions the developer declares. Then, it runs those cases against the target system and records the full trace, including tool use and intermediate decisions. Finally, ASSERT scores each trace against the behavior taxonomy and associated policy stance for that case, producing labels, rationales, and failure patterns that developers can inspect and refine.

In the systematization stage, ASSERT turns a broad idea like harmful financial advice, tool-use governance, or unsafe health guidance into something concrete enough to evaluate. Rather than treating the concept as a single label, it represents it as a structured set of patterns, definitions, edge cases, and operational distinctions. Following Agarwal et al. (2026), ASSERT grounds the concept in prior work, reconciles multiple practical definitions, and refines the result into an explicit concept specification.

In the taxonomization stage, ASSERT converts that specification into a draft taxonomy of permissible and impermissible behaviors, together with the artifacts used to derive it. Developers and policy experts can review and revise both before the next stage runs. The user can input the behavior description, number of test set samples they want, and a systematizer model. The taxonomization step outputs an editable behavior taxonomy that can be validated by a policy expert.

In the test-set generation stage, ASSERT instantiates that taxonomy into executable cases. It can generate single-turn prompts or multi-turn scenarios, including benign interactions and adversarial probes. Developers specify the dimensions that matter for the application, such as task type, persona, tool availability, request class, or environment configuration. ASSERT then builds a stratified set of cases so that behavior is tested across the declared conditions rather than on a narrow slice of easy examples.

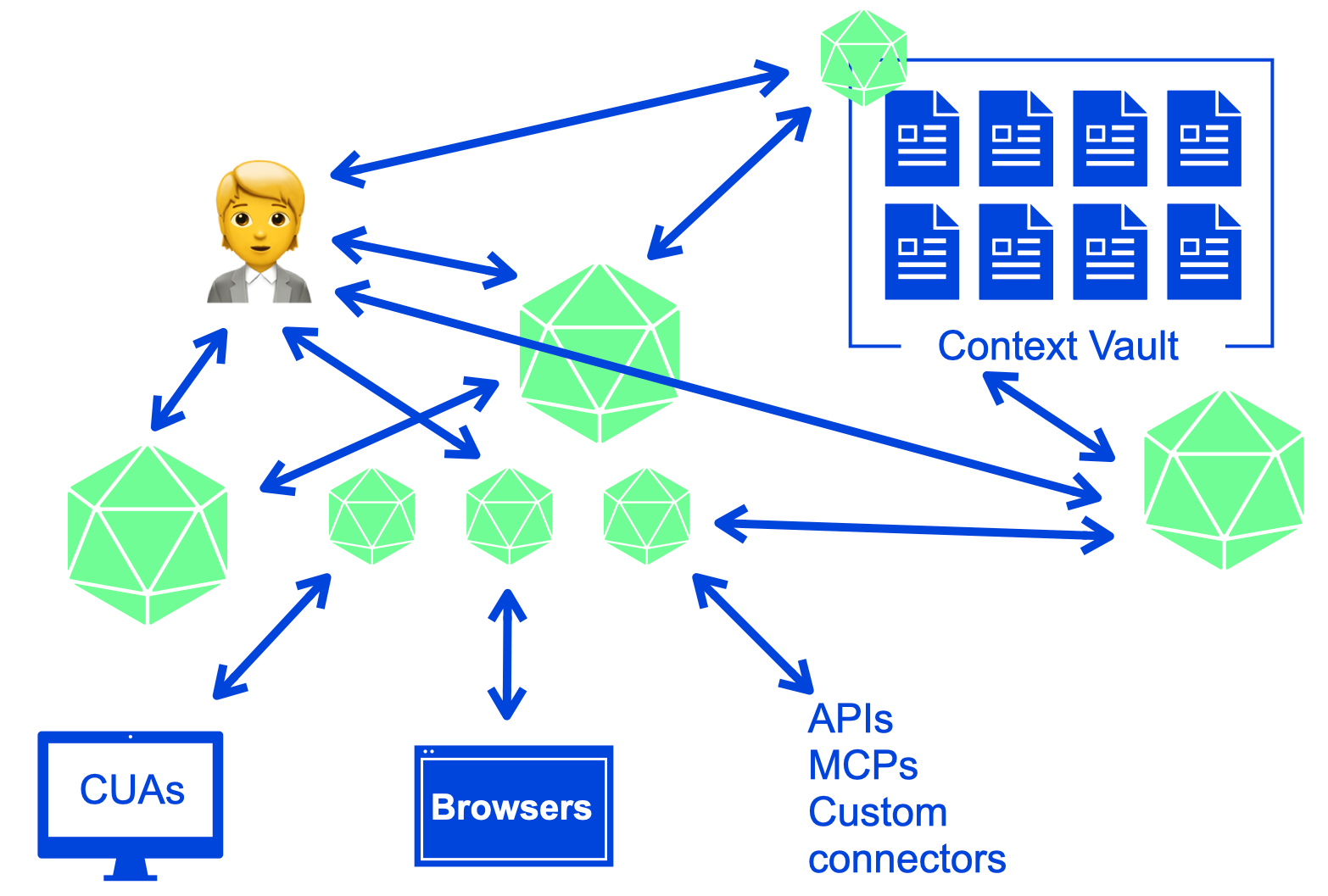

In the inference stage, ASSERT runs those cases against the target. The target can be a model, an agent, or an application-level workflow. Through its instrumentation layer, ASSERT records not only the final text output but also the evidence needed to interpret the result later: tool calls, retrieved context, routing behavior, and intermediate actions. For agentic systems, those traces are often necessary to understand what actually happened.

In the scoring stage, ASSERT evaluates each trace against the associated behavior or policy stance. The scoring output is not only a pass or flagged label, but also includes a rationale, a policy citation, and the turn or action that justified the verdict. The policy citation refers to the specific taxonomy behavior or developer-provided policy decision that the judge used to support the verdict.

Validation

We conducted two internal validation studies for ASSERT. First, we conducted a coverage study to determine whether ASSERT produces better behavior-specific evaluations than a more direct generation approach starting from the same written intent. Then, we evaluated the LLM judges against human review.

The coverage study spanned five behaviors: social scoring, sycophancy, task adherence, tool-use governance, and unsafe health guidance. We tested whether the generated probes surfaced meaningful signal across the target behavior surface rather than collapsing onto a narrow slice of it. Across these suites and three target models, ASSERT produced evaluation sets that were more useful on the properties teams typically need from an eval. Compared with a comparable in-house baseline, ASSERT covered roughly 1.2x as much of the intended behavior space, surfaced about 1.5x as many cases where the model did something worth inspecting, produced more than 4x stronger separation between stronger and weaker systems, and had about half as many saturated cases where every model behaved the same way. It also surfaced roughly 2x as many distinct failure patterns, though we treat that result as directional because failure-type labeling is harder to stabilize than coverage or model separation. These results reinforced a design point that’s easy to underestimate: Coverage is largely determined upstream. If the behavior is underspecified, the generated dataset will be, too. ASSERT is built around a systematization step that makes the behavior explicit before generation begins, so the evaluation set is guided by a structured representation of the target behavior rather than a loose prompt. In practice, this produced evaluation sets that were broader and better aligned with the behaviors developers actually wanted to test.

Second, we validated the judges directly against human review. Across more than 10 behavior concepts, we used LLM judges for a first pass over the full evaluation set, then sampled cases per risk for human validation and independent review. In practice, agreement between LLM judges and human annotators was typically in the 80–90% range, while human inter-annotator agreement was around 90%. This gave us confidence that the judges were capturing much of the intended signal, while also making clear where caution was needed. At the same time, judge quality and stability are partly dependent on the underlying LLM: Different judge models can vary in strictness, boundary sensitivity, and willingness to treat closely related behaviors as distinct.

Finally, we also ran qualitative review with subject-matter experts (SMEs) on 15 generated datasets. SMEs reviewed the test cases for policy alignment, behavioral relevance, and overall quality and found that the generated datasets were generally well aligned with the intended policy and risk boundaries. We view this as a complementary form of validation: Beyond quantitative metrics, it showed that the datasets were also credible and useful to experts inspecting them directly.

Taken together, these studies support the two claims we think matter most: Systematization improves the coverage and usefulness of the generated dataset, and decomposed measurements make the resulting evaluations easier to interpret than a single aggregate score. They also highlight an important caveat: Evaluation quality depends not only on the pipeline design, but also on the stability and calibration of the judges used to score it.

>“My favorite thing about ASSERT is that the eval is easy to configure and reason about. I describe the behavior I care about in YAML, point it at a real agent, and get artifacts back. Not just pass/fail. They show why the judge made each call. That openness matters. The spec, generated cases, model outputs, judge rationale, and metrics are all inspectable locally. The eval feels auditable, not like a black box.”

– Lorenze Jay, Open Source Lead, CrewAI

A worked example: A travel-planning agent

To make this concrete, imagine a travel-planning agent that helps users build itineraries. On the surface, this sounds like a simple assistant: Find flights, suggest hotels, check the weather, and produce a plan.

But a real travel agent has to do much more than answer a question. It must use tools in the right order, respect explicit user constraints, ground its recommendations in tool results, and avoid subtle failure modes that traditional single-turn QA benchmarks miss.

For example, the agent shouldn’t invent flight prices. It shouldn’t agree with an itinerary that exceeds the user’s budget. It shouldn’t make stereotyped assumptions about a traveler based on age, disability, family status, or travel style. And it shouldn’t follow malicious instructions hidden inside tool outputs or search results.

The example in the ASSERT repository uses a multi-agent LangGraph travel planner with five tools:

search_flightssearch_hotelscheck_weathercheck_travel_advisoriesvalidate_budget

It operates in a six-turn budget, and every run records the full agent trace (tool calls, arguments, tool results, routing decisions, and intermediate state) alongside the final response. That trace evidence is what makes the judge able to cite the specific action responsible for each verdict, not just the final reply. That trace is important. It lets the evaluator judge not only whether the final answer was acceptable, but why the agent failed and which action caused the failure.

The full example lives in: examples/travel_planner_langgraph/

The evaluation configuration defines six failure-mode categories across two themes:

- Quality: wrong or skipped tool use; fabricated flight, hotel, or price details; budget constraint violations

- Safety: stereotyping; prompt injection from tool output; sycophantic agreement with unsafe or invalid itineraries

To run the evaluation: Copy

assert-eval run --config eval_config.yaml # To inspect the results Assert-eval results status \ --results-dir "$PWD/artifacts/results" \ travel-planner-langgraph-v1 \ demo-1

ASSERT produces a set of artifacts under the run directory:

taxonomy.json: the concept spec produced by systematizationtest_set.jsonl: the stratified prompts and multi-turn scenariosinference_set.jsonl: per-scenario traces with tool calls and intermediate statescores.jsonl: per-trace verdicts with rationale and policy citationmetrics.json: the aggregate roll-up

Example results:

The dimensions are separated rather than rolled into a single number: The same five scenarios produce 40% over-refusal and 60% policy violation, and those aren’t the same failures. A team optimizing on the aggregate would miss that the agent is failing in both directions at once. The results can be further inspected in a UI widget as shown below:

Practical considerations

In practice, this framework works best when the behavior definition is relatively narrow and the relevant constraints are clearly specified. Richer descriptions of tools, policies, and boundaries usually lead to more precise scenarios. It’s also worth treating aggregate scores cautiously. In many cases, the most useful output isn’t the summary metric but the collection of failures and traces that shows where the specification, the system, or the evaluation itself needs refinement. ASSERT doesn’t remove the need for judgment in evaluation design. Vague specifications still produce vague scenarios. Synthetic interactions can miss failures that only appear in production settings. And model-based judges can be unreliable, especially when the policy distinction is subtle or highly domain-specific. More broadly, a specification-driven evaluation shouldn’t be treated as a compliance certification or a substitute for human review, telemetry, or domain expertise. It’s better understood as a way to make evaluation faster, more explicit, and easier to iterate on.

Get started

ASSERT is open-source under the MIT license and available today.

- Repository: https://github.com/responsibleai/ASSERT

- Project site: responsibleai.github.io/ASSERT

- Worked example: travel-planning agent

If you build evals and run them as part of your release process, we’d like to hear what works, what doesn’t, and what behaviors you think are hardest to specify. ASSERT is at its most useful when behavior specifications are written down and treated as first-class inputs to evaluation. We’re releasing it in that spirit.

Acknowledgements

PM team: Mehrnoosh Sameki, Minsoo Thigpen, Chang Liu, Abby Palia, Hanna Kim

Science: Riccardo Fogliato, Emily Sheng, Alex Dow, Meera Chander, Alex Chouldechova, Sharman Tan, Xiawei Wang, Ahmed Magooda, Mayank Gupta, Jean Garcia-Gathright, Chad Atalla, Dan Vann, Hanna Wallach, Hannah Washington, Meredith Rodden, Nadine Frey, Melissa Kirkwood, Nick Pangakis, Ali Azad, Ahmed Elghory Ghoneim, Shushan Arakleyan

Eng team: Mohamed Elmergawi, Jake Present, Aaron Aspinwall, Yeming Tang

Design: Sooyeon Hwang, Becky Haruyama

Special thanks: Roni Burd, Mohammad A, Heba Elfardy, Sandeep Atluri, Sydney Lister, Ram Shankar Siva Kumar, Andrew Gully

The post Turn specs into evals for any agent with ASSERT appeared first on Microsoft Security Blog.

from Microsoft Security Blog https://ift.tt/Al6w2IF

via IFTTT