This ongoing GenAI Docker Labs series will explore the exciting space of AI developer tools. At Docker, we believe there is a vast scope to explore, openly and without the hype. We will share our explorations and collaborate with the developer community in real time. Although developers have adopted autocomplete tooling like GitHub Copilot and use chat assistants, there is significant potential for AI tools to assist with more specific tasks and interfaces throughout the entire software lifecycle. Therefore, our exploration will be broad. We will be releasing things as open source so you can play, explore, and hack with us, too.

Generative AI (GenAI) is changing how we interact with tools. Today, we might experience this predominantly through the use of new AI-powered chat assistants, but there are other opportunities for generative AI to improve the life of a developer.

When developers start working on a new project, they need to get up to speed on the tools used in that project. A common practice is to document how those tools are used in the project's README.md and to version that documentation along with the project.

Can we use generative AI to generate this content? We want this content to represent best practices for how tools should be used in general but, more importantly, how tools should be used in this particular project.

We can think of this as a kind of conversation between developers, agents representing tools used by a project, and the project itself. Let’s look at this for the Docker tool itself.

Generating Markdown in VSCode

For this project, we have written a VSCode extension that adds one new command called “Generate a runbook for this project.” Figure 1 shows it in action:

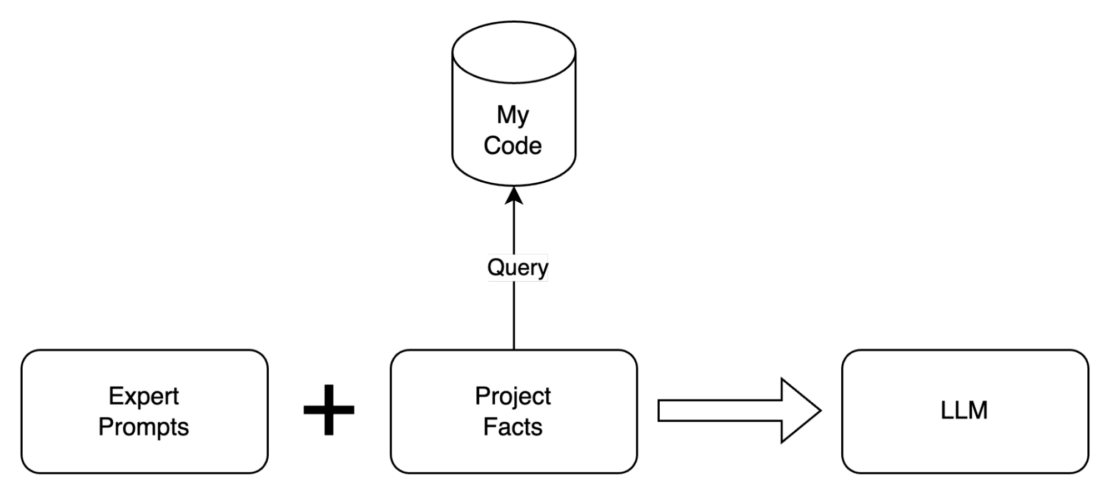

This approach combines prompts written by tool experts with knowledge about the project itself. This combined context improves the LLM’s ability to generate documentation (Figure 2).

Although we’re illustrating this idea on a tool that we know very well (Docker!), the idea of generating content in this manner is quite generic. The prompts we used for getting started with Docker build, run, and compose are available on GitHub. There is certainly an art to writing these prompts, but we think that tool experts have the right knowledge to create prompts of this kind, especially if AI assistants can then help them make their work easier to consume.

There is also an essential point here. If we think of the project as a database from which we can retrieve context, then we’re effectively giving an LLM the ability to retrieve facts about the project. This allows our prompts to depend on local context. For a Docker-specific example, we might want to prompt the AI to not talk about compose if the project has no compose.yaml files.

“I am not using Docker Compose in this project.”

That turns out to be a transformative user prompt if it’s true. This is what we’d normally learn through a conversation. However, there are certain project details that are always useful. This is why having our assistants right there in the local project can be so helpful.
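To make the idea of prompts that depend on local context concrete, here is a minimal sketch in Python. It is not the extension's actual code: the function names `project_facts` and `build_prompt` are hypothetical, and it only checks the project root for the file names Docker Compose looks for by default.

```python
from pathlib import Path

# Default Compose file names; checking only these, and only in the
# project root, is a simplifying assumption of this sketch.
COMPOSE_FILE_NAMES = (
    "compose.yaml",
    "compose.yml",
    "docker-compose.yaml",
    "docker-compose.yml",
)


def project_facts(project_root):
    """Return plain-language facts about the project (hypothetical helper)."""
    root = Path(project_root)
    has_compose = any((root / name).is_file() for name in COMPOSE_FILE_NAMES)
    if has_compose:
        return ["I am using Docker Compose in this project."]
    return ["I am not using Docker Compose in this project."]


def build_prompt(expert_prompt, project_root):
    """Append the retrieved project facts to a prompt written by a tool expert."""
    facts = "\n".join("- " + fact for fact in project_facts(project_root))
    return expert_prompt + "\n\nProject facts:\n" + facts
```

The point of the sketch is that the fact is looked up from the project rather than learned through conversation, so the same expert prompt produces different documentation for different projects.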

Runnable Markdown

Although Markdown files are mainly for reading, they often contain runnable things. LLMs converse with us in text that often contains code blocks that represent actual runnable commands. And, in VSCode, developers use the embedded terminal to run commands against the currently open project. Let’s short-circuit this interaction and make commands runnable directly from these Markdown runbooks.

In the current extension, we’ve added a code action to every code block that contains a shell command so that users can launch that command in the embedded terminal. During our exploration of this functionality, we have found that treating the Markdown file as a kind of REPL (read-eval-print loop) can help to refine the output from the LLM and improve the final content. Figure 3 shows what this looks like in action.
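Attaching an action to every shell code block first requires finding those blocks in the runbook. The sketch below shows one way that step could look in Python; it is an illustration, not the extension's implementation. The function name `shell_blocks` and the set of language tags treated as "shell" are assumptions, and it handles only simple, non-nested backtick fences.

```python
# Built this way so the example does not contain a literal fence marker.
FENCE = "`" * 3

# Language tags treated as shell commands; this list is an assumption.
SHELL_LANGUAGES = {"sh", "bash", "shell", "zsh"}


def shell_blocks(markdown):
    """Return the body of every fenced code block tagged with a shell language."""
    blocks = []
    in_fence = False
    current = None  # lines of the shell block being collected, or None
    for line in markdown.splitlines():
        stripped = line.strip()
        if stripped.startswith(FENCE):
            if not in_fence:
                # Opening fence: read the language tag from the info string.
                in_fence = True
                info = stripped[len(FENCE):].split()
                language = info[0].lower() if info else ""
                current = [] if language in SHELL_LANGUAGES else None
            else:
                # Closing fence: keep the block only if it was a shell block.
                if current is not None:
                    blocks.append("\n".join(current))
                in_fence = False
                current = None
        elif in_fence and current is not None:
            current.append(line)
    return blocks
```

Each returned string is a candidate command to send to the embedded terminal; blocks in other languages are skipped.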

Markdown extends your editor

In the long run, nobody is going to navigate to a Markdown file in order to run a command. However, we can treat these Markdown files as scripts that create commands for the developer’s edit session. We can even let developers bind them to keystrokes (e.g., type ,b to run the build code block from your project runbook).
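As a rough illustration of treating a runbook as a source of editor commands, the Python sketch below derives a shortcut table from a Markdown runbook. The convention it uses is purely an assumption made for this example: each command is named by the heading that precedes it, and the shortcut is a leader character followed by the first letter of that name. The function names are hypothetical.

```python
# Built this way so the example does not contain a literal fence marker.
FENCE = "`" * 3


def commands_by_heading(markdown):
    """Map each lowercased heading to the first fenced block that follows it."""
    commands = {}
    heading = None
    collecting = None  # lines of the block being read, or None outside a fence
    for line in markdown.splitlines():
        stripped = line.strip()
        if collecting is not None:
            if stripped.startswith(FENCE):
                # Closing fence: record the block under the current heading.
                if heading and heading not in commands:
                    commands[heading] = "\n".join(collecting)
                collecting = None
            else:
                collecting.append(line)
        elif stripped.startswith(FENCE):
            collecting = []
        elif stripped.startswith("#"):
            heading = stripped.lstrip("#").strip().lower()
    return commands


def shortcut_table(commands, leader=","):
    """Assign leader + first letter of the name to each command; first one wins."""
    table = {}
    for name, command in commands.items():
        table.setdefault(leader + name[0], command)
    return table
```

With a runbook that has a "Build" section, this yields a `,b` entry holding that section's command. A real editor integration would still need to register the key binding and send the command to the terminal.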

In the end, this is just the AI Assistant talking to itself. The Assistant recommends a command. We find the command useful. We turn it into a shortcut. The Assistant remembers this shortcut because it’s in our runbook, and then makes it available whenever we’re developing this project.

Figure 4 shows a real feedback loop between the Assistant, the generated content, and the developer who is actually running these commands.

As developers, we tend to vote with our keyboards. If this command is useful, let’s make it really easy to run! And if it’s useful for me, it might be useful for other members of my team, too.

The GitHub repository and install instructions are ready for you to try today.

For more, see this demo: VSCode Walkthrough of Runnable Markdown from GenAI.

Subscribe to Docker Navigator to stay current on the latest Docker news.

Learn more

- Subscribe to the Docker Newsletter.

- Read Docker, Putting the AI in Containers.

- Read the AI Trends Report 2024: AI’s Growing Role in Software Development.
